A Self-Improving Home Agent That Knows Where You Are

A three-layer architecture for a home agent that grows its own capabilities, tracks presence as ambient context, and keeps full autonomy safe by design.

Note: This is a really rough pass. I just needed to get some of it down "on paper".

I've been building a personal home agent, the Jarvis-in-the-walls kind rather than the "open a chat window and type" kind. Most of how I use AI day-to-day works the other way around: I open Claude Code or a desktop app, I type, it does something, I close it. That's fine for a lot of work.

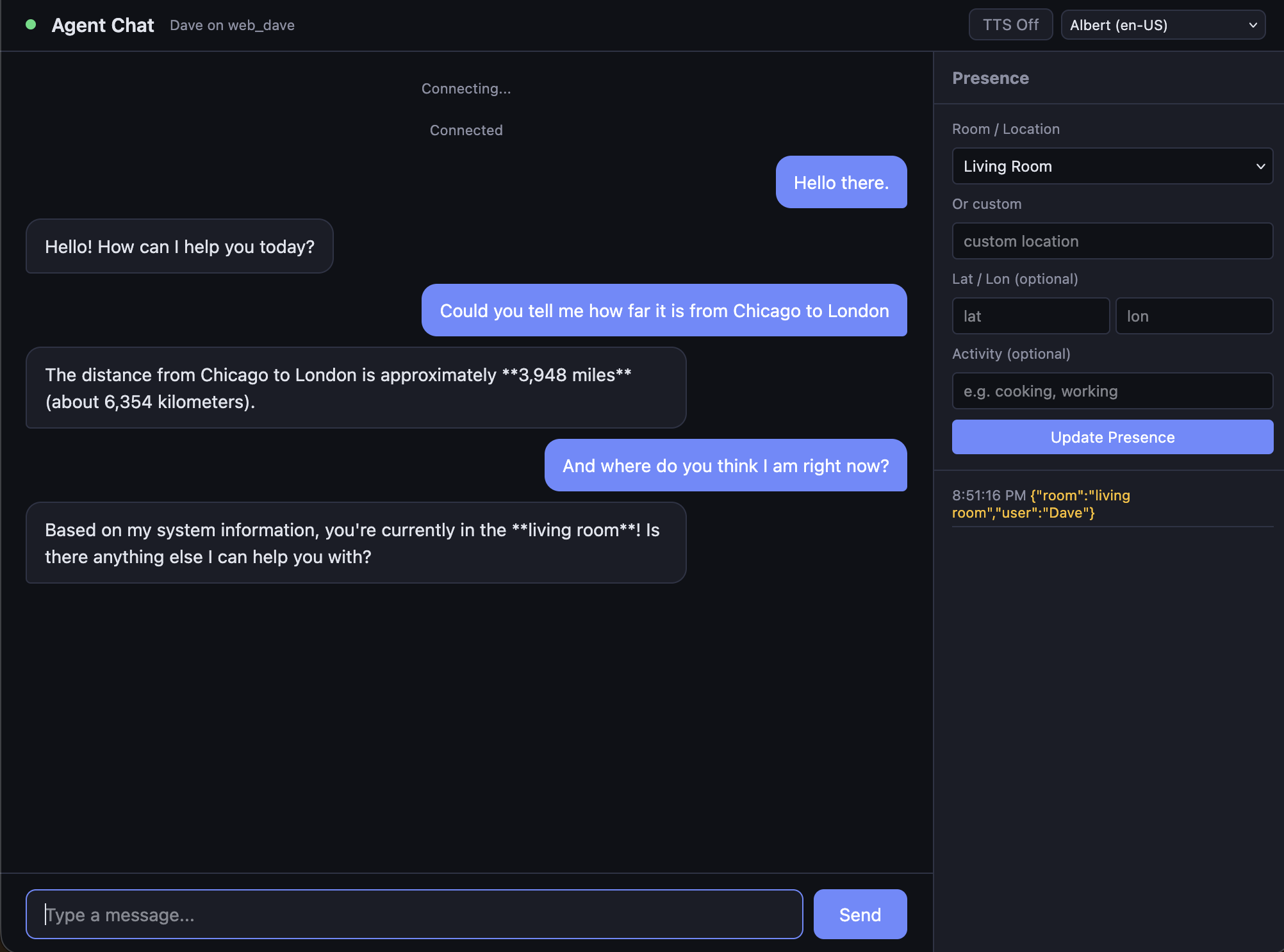

For the agent living in my house, I want something that's already there: something that notices I'm in the kitchen and replies on the kitchen speaker, and, if I wander to the living room mid-thought, follows me there on my phone. I want it to run on my own hardware when possible, use whatever model is best for a given job, and grow its own capabilities when it hits something it can't do. I also want the physical world (where I am, what time it is, what devices are around me, what's still running from an earlier request) to be ambient context. Basically, it should know whether I'm miles away or in my living room, and respond accordingly.

This isn't the first time I've taken a run at this. A long while back I had a prototype of a self-improving agent — one that could notice a capability gap and write itself a new tool to fill it. The idea held up. The constraint didn't: I was running everything on a laptop, and it was all crammed into one little API. It worked as a demo. It was never useful enough to keep around. Also it was super dangerous, and I knew it. A friend and I took to referring to it as the DemonBot.

Since then, OpenClaw landed, and the news around it taught me two things: agents that act on your behalf are very cool, and you have to be careful with agents that run tool functions.

So I started my little project over, this time with a different question: what shape would my home system need to take so it can be fully autonomous and reasonably safe, (mostly) run on hardware I own, and treat location and presence as first-class inputs? Getting there meant treating the problem as three independent systems rather than one big agent. The rest of this post is about how those three systems fit together, and why each one is shaped the way it is.

Three layers, not one agent

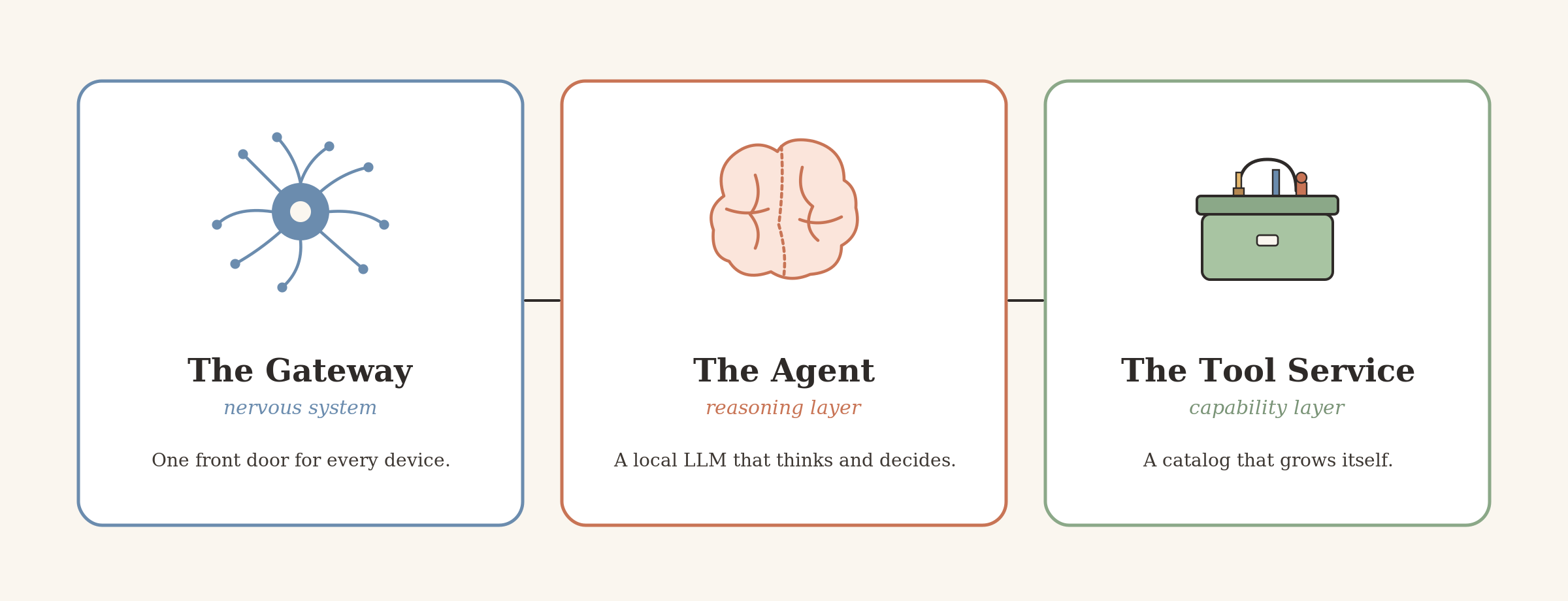

The system has three independent components. They talk to each other over well-defined interfaces, but none of them knows how the others are built:

- The tool service is the capability layer. It hosts a searchable catalog of functions the agent can run, executes them in sandboxed environments, and when asked, writes new functions on demand using an LLM-driven authoring pipeline. It doesn't reason or orchestrate. It's kinda dumb, on purpose.

- The agent is the reasoning layer: a process running an LLM (ideally a local one) that consumes events from a bus, thinks about them, calls the tool service when it needs something done, and sends responses back out as a... well, chatbot. It runs in a container with deliberately narrow external interfaces: the event bus and the tool service. Nothing else.

- The gateway is the nervous system. An MQTT broker (Mosquitto) plus an HTTP asset server. Every client device — phone, kitchen speaker, chat app, future doorbell — talks to the gateway, never to the agent or tool service directly. Messages flow through the bus in one of four shapes (right now): interaction, presence, time, or process.

The tool service is the hands, the agent is the brain, and the gateway is the nervous system.

The tool service: a capability layer that can grow itself

The design separates capability from reasoning pretty aggressively. The tool service is a FastAPI app on port 8000 with two interfaces: a REST API and a Model Context Protocol (MCP) endpoint at /mcp/mcp. Authentication is role-based, with three tiers of API key: an executor key can discover and invoke tools; an agent key can also request that new tools be authored; an admin key can manage secrets, keys, and the catalog. That separation is deliberate. When the home agent eventually runs as a persistent process, it gets an agent key, not an admin key, so even a compromised or prompt-injected agent can't leak secrets or revoke keys.
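
To make the tiering concrete, here's a minimal sketch of the permission check. It assumes each tier is a strict superset of the one below it (which is how the tiers are described above) and uses hypothetical action names; the real service presumably wires this into its FastAPI routes rather than a standalone function.

```python
from enum import IntEnum


class Role(IntEnum):
    """Key tiers, ordered so a higher tier inherits everything below it."""
    EXECUTOR = 1
    AGENT = 2
    ADMIN = 3


# Hypothetical action names mapped to the minimum role that may perform them.
MIN_ROLE = {
    "search_tools": Role.EXECUTOR,
    "execute_tool": Role.EXECUTOR,
    "request_tool": Role.AGENT,
    "update_tool": Role.AGENT,
    "manage_secrets": Role.ADMIN,
    "manage_keys": Role.ADMIN,
    "manage_catalog": Role.ADMIN,
}


def is_allowed(role: Role, action: str) -> bool:
    """True if a key with `role` may perform `action`; unknown actions are denied."""
    required = MIN_ROLE.get(action)
    return required is not None and role >= required
```

With this shape, an agent key passes `is_allowed(Role.AGENT, "request_tool")` but fails `is_allowed(Role.AGENT, "manage_secrets")`, which is exactly the boundary that keeps a prompt-injected agent away from secrets.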

Under the hood of the tool service are four main moving parts:

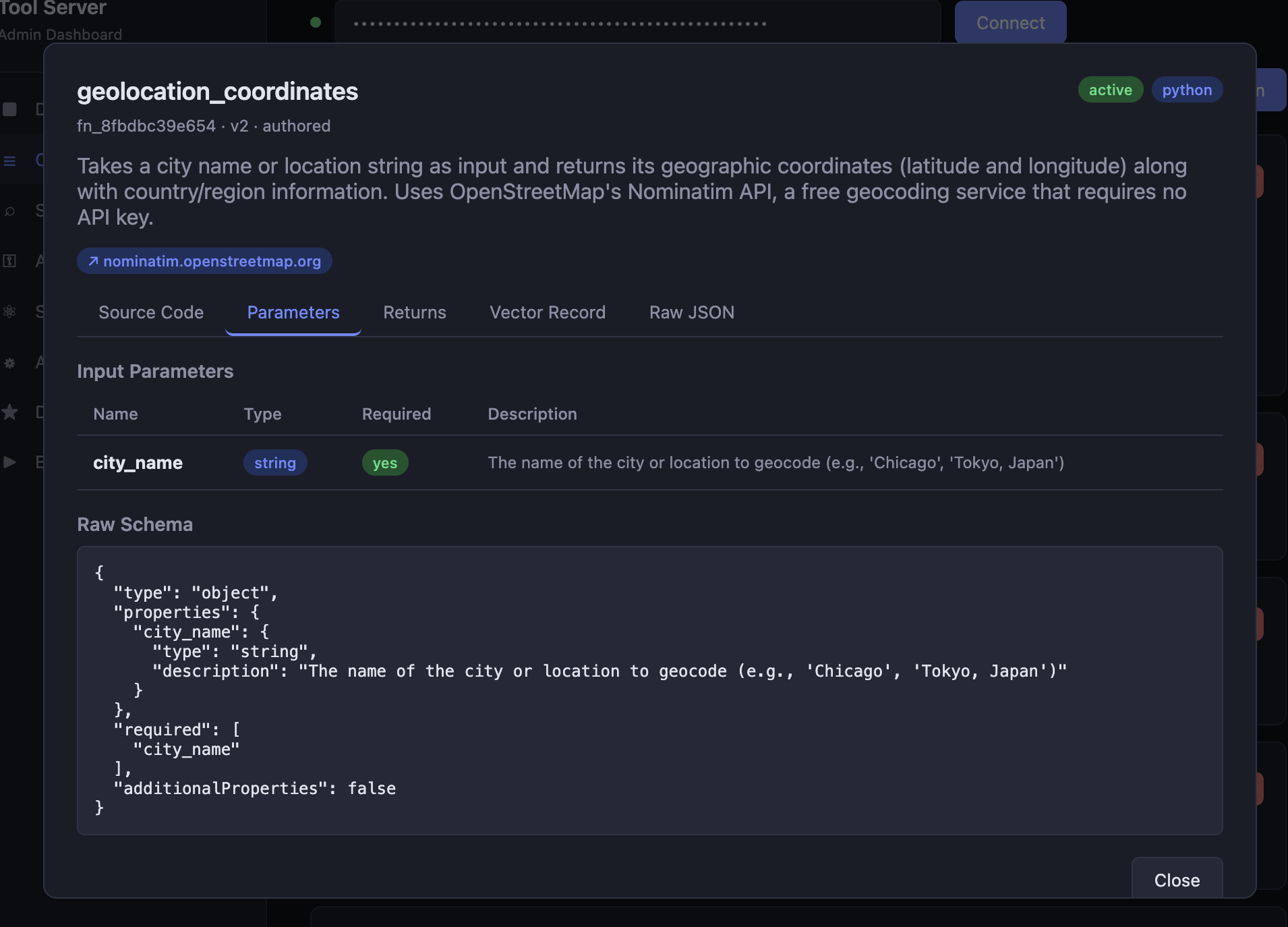

The catalog is the list of available tool functions. Each entry carries a natural language description, a JSON Schema for parameters and return value, the function source, a list of secrets it needs at runtime, and a list of domains it's permitted to reach. Function metadata sits in SQLite; the semantic embeddings live in Qdrant as 768-dimensional vectors.
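
A rough sketch of what one catalog entry and its SQLite row might look like. The field names, table layout, and JSON-in-TEXT encoding are assumptions for illustration; the 768-dimensional vector would live in Qdrant, keyed by the same tool name, and isn't shown.

```python
import json
import sqlite3
from dataclasses import asdict, dataclass, field


@dataclass
class CatalogEntry:
    # Illustrative field names mirroring what each entry is said to carry.
    name: str
    description: str               # natural-language text that gets embedded
    parameters_schema: dict        # JSON Schema for inputs
    returns_schema: dict           # JSON Schema for the return value
    source: str                    # the function's source code
    secrets: list[str] = field(default_factory=list)
    network_permissions: list[str] = field(default_factory=list)
    authored: bool = False         # True if written by the authoring pipeline


SCHEMA = """CREATE TABLE IF NOT EXISTS functions (
    name TEXT PRIMARY KEY, description TEXT NOT NULL,
    parameters_schema TEXT NOT NULL, returns_schema TEXT NOT NULL,
    source TEXT NOT NULL, secrets TEXT NOT NULL,
    network_permissions TEXT NOT NULL, authored INTEGER NOT NULL)"""

JSON_FIELDS = ("parameters_schema", "returns_schema", "secrets", "network_permissions")


def open_catalog(path: str = ":memory:") -> sqlite3.Connection:
    conn = sqlite3.connect(path)
    conn.row_factory = sqlite3.Row   # rows can be read by column name
    conn.execute(SCHEMA)
    return conn


def save(conn: sqlite3.Connection, entry: CatalogEntry) -> None:
    row = asdict(entry)
    for key in JSON_FIELDS:          # structured fields are stored as JSON text
        row[key] = json.dumps(row[key])
    row["authored"] = int(row["authored"])
    conn.execute(
        "INSERT OR REPLACE INTO functions VALUES (:name, :description, :parameters_schema,"
        " :returns_schema, :source, :secrets, :network_permissions, :authored)",
        row,
    )
    conn.commit()


def load(conn: sqlite3.Connection, name: str):
    row = conn.execute("SELECT * FROM functions WHERE name = ?", (name,)).fetchone()
    if row is None:
        return None
    data = dict(row)
    for key in JSON_FIELDS:
        data[key] = json.loads(data[key])
    data["authored"] = bool(data["authored"])
    return CatalogEntry(**data)
```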

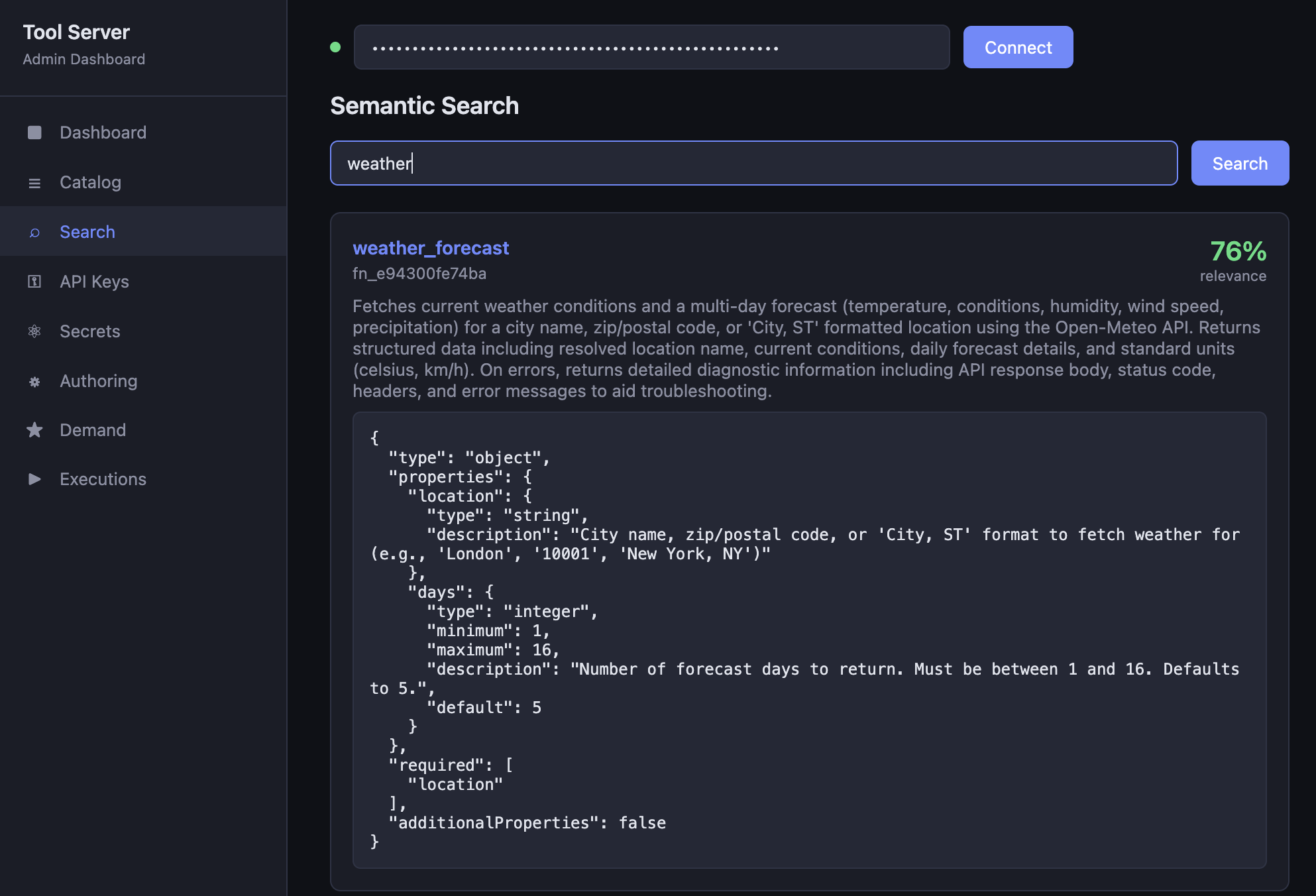

The authored tag means this one was written by the pipeline rather than hand-rolled.

Semantic search is how tools are discovered. When the agent decides it needs, say, a weather lookup, it doesn't enumerate a hardcoded list; it issues a natural-language query ("weather forecast") and gets back ranked candidates. The same embedding model (Ollama's nomic-embed-text) is used to index the catalog and to embed queries, so the vectors are always comparable. The agent doesn't need to know a tool exists before searching for it, which means newly authored tools become discoverable the moment they're published, with no configuration step anywhere else.
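
The ranking step can be sketched like this, assuming plain cosine similarity and a hypothetical 0.5 cutoff (in the real system Qdrant does the scoring server-side). The `embed` argument stands in for a call to nomic-embed-text; it's injected so the example runs without Ollama.

```python
import math
from typing import Callable, Sequence

Vector = Sequence[float]


def cosine(a: Vector, b: Vector) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search(query: str, index: dict[str, Vector], embed: Callable[[str], Vector],
           threshold: float = 0.5, limit: int = 5) -> list[tuple[str, float]]:
    """Rank catalog entries by similarity to `query`.

    `embed` must be the same model that built `index`, or the scores are
    meaningless. Scores below `threshold` are dropped, so an empty result
    is a "search miss".
    """
    q = embed(query)
    scored = [(name, cosine(q, vec)) for name, vec in index.items()]
    hits = [(name, s) for name, s in scored if s >= threshold]
    hits.sort(key=lambda pair: pair[1], reverse=True)
    return hits[:limit]
```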

Execution runs the function with the parameters the agent supplies. The design calls for each execution to happen in a per-function sandbox, with scoped secrets injected as environment variables and network egress restricted to the function's declared network_permissions. For simplicity, today's runner doesn't do that yet, but the code is explicitly structured so the Docker-based sandbox can slot in without the rest of the system changing. The function's interface is the same either way: secrets arrive via os.environ, and binary inputs and outputs go through a small assets module, so functions never touch the filesystem directly. The agent has no direct access to anything; every capability is mediated through a function that's been authored, reviewed, and published, with its declared blast radius stapled to it.
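
A minimal sketch of the secrets-as-environment contract, assuming a hypothetical convention where a tool's source defines `run(**params)` and the runner exchanges JSON over stdin/stdout. A child process with a scrubbed environment is not a sandbox (nothing restricts egress here); it only shows how a function sees exactly the secrets it declared and nothing else.

```python
import json
import subprocess
import sys

# Hypothetical wrapper: the tool's source must define run(**params).
# Parameters arrive as JSON on stdin; the result goes back as JSON on stdout.
HARNESS = """
import json, sys
{source}
params = json.load(sys.stdin)
json.dump(run(**params), sys.stdout)
"""


def execute(source: str, params: dict, declared_secrets: list[str],
            secret_store: dict[str, str], timeout: float = 30.0):
    # Only declared secrets are copied into the child's environment; nothing
    # from the parent's os.environ leaks through. A missing secret raises KeyError.
    env = {name: secret_store[name] for name in declared_secrets}
    proc = subprocess.run(
        [sys.executable, "-c", HARNESS.format(source=source)],
        input=json.dumps(params),
        capture_output=True,
        text=True,
        env=env,
        timeout=timeout,
    )
    if proc.returncode != 0:
        raise RuntimeError(proc.stderr.strip())
    return json.loads(proc.stdout)
```

If a declared secret hasn't been provided yet, the lookup fails before anything runs, which is one concrete form of the authoring-versus-secrets friction mentioned later.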

The authoring pipeline is the part I keep showing people because it's the piece that surprises them. When the agent can't find a tool for what it needs, it doesn't give up — it calls the service and gets back a job ID to poll. The agent has three entry points for this:

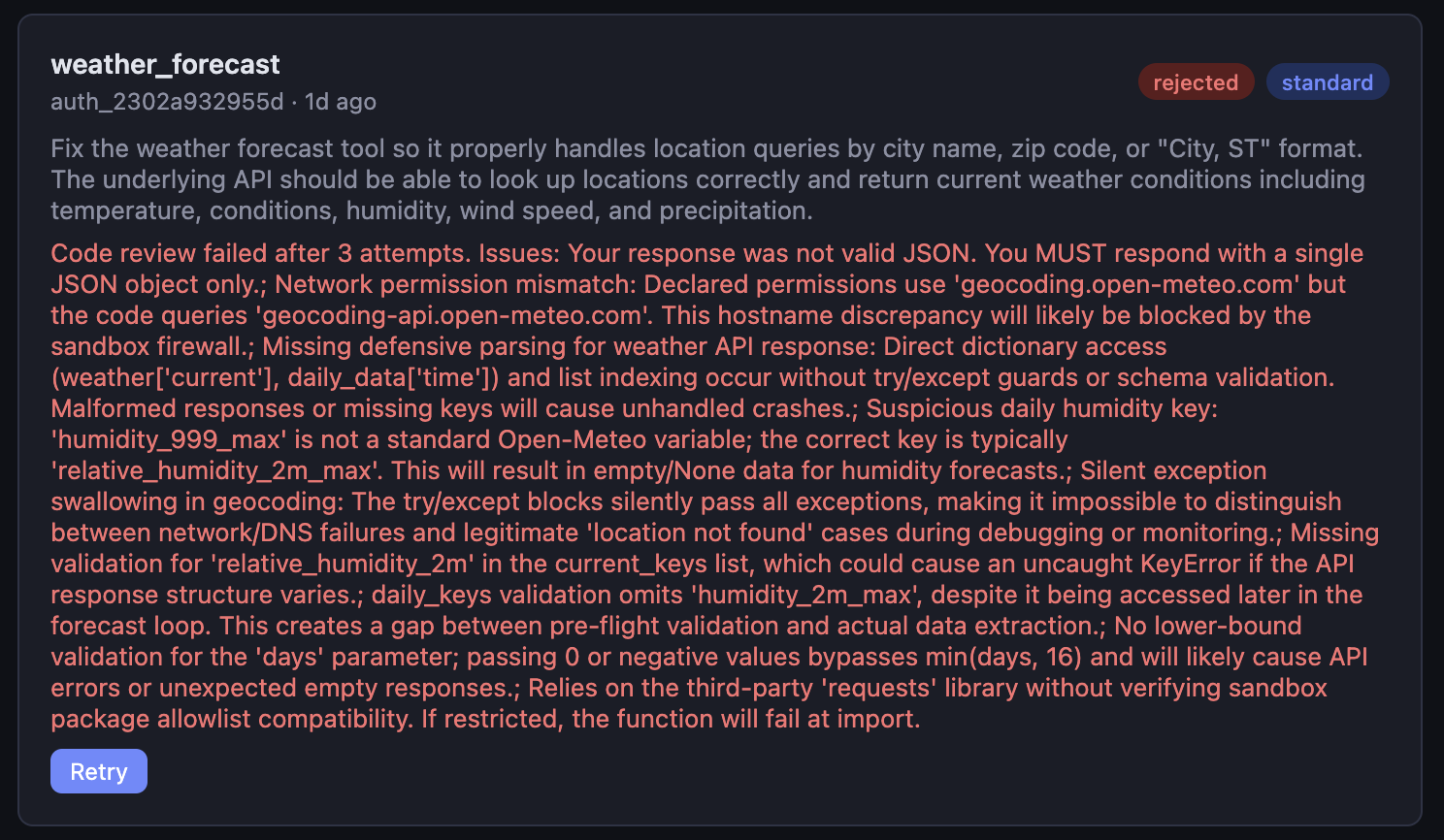

- request_tool: hands the service a natural-language description of the capability it needs. Internally, the service runs a four-stage pipeline on that request:
  - analyze: decide complexity, required secrets, and network permissions
  - generate: Claude Sonnet (currently), in an author role, writes the function and its schemas
  - review: a second Sonnet call, in a reviewer role, checks for correctness, security issues, and hardcoded credentials. Up to three retry cycles feed reviewer feedback back to the generator.
  - publish: embed the description, index the vector in Qdrant, and register the function in the catalog

- update_tool: the same pipeline aimed at an existing function, with the current source and schemas handed to the generator as context.

- check_authoring_status: poll a job while it's running.
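
The pipeline's control flow can be sketched as a loop, with each stage injected as a callable so the example runs without model calls. This is a sketch under assumptions: in the real service the stages call Sonnet and the whole thing runs behind a job ID that the agent polls, and I've read "up to three retry cycles" as one initial attempt plus three retries.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Review:
    approved: bool
    feedback: str = ""


@dataclass
class AuthoringResult:
    status: str              # "published" or "rejected"
    source: Optional[str]
    attempts: int


def author_tool(request: str,
                analyze: Callable[[str], dict],
                generate: Callable[[str, dict, str], str],
                review: Callable[[str, dict], Review],
                publish: Callable[[str, dict], None],
                max_retries: int = 3) -> AuthoringResult:
    plan = analyze(request)                       # complexity, secrets, network permissions
    feedback = ""
    for attempt in range(1, max_retries + 2):     # first attempt plus retries
        source = generate(request, plan, feedback)
        verdict = review(source, plan)
        if verdict.approved:
            publish(source, plan)                 # embed, index in Qdrant, register
            return AuthoringResult("published", source, attempt)
        feedback = verdict.feedback               # handed to the generator next pass
    return AuthoringResult("rejected", None, max_retries + 1)
```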

The moment a function is published, it's discoverable by the very next search. The agent can request a new tool, have it reviewed for safety, and then turn around and use it in the same conversation. It can use it again tomorrow, too. The catch is that a new tool may declare that it needs secrets. More on that in a second.


This is the piece I originally built on the laptop, and it's finally earning its keep now that the reasoning model sitting above it is competent enough to make decisions and call tools reliably.

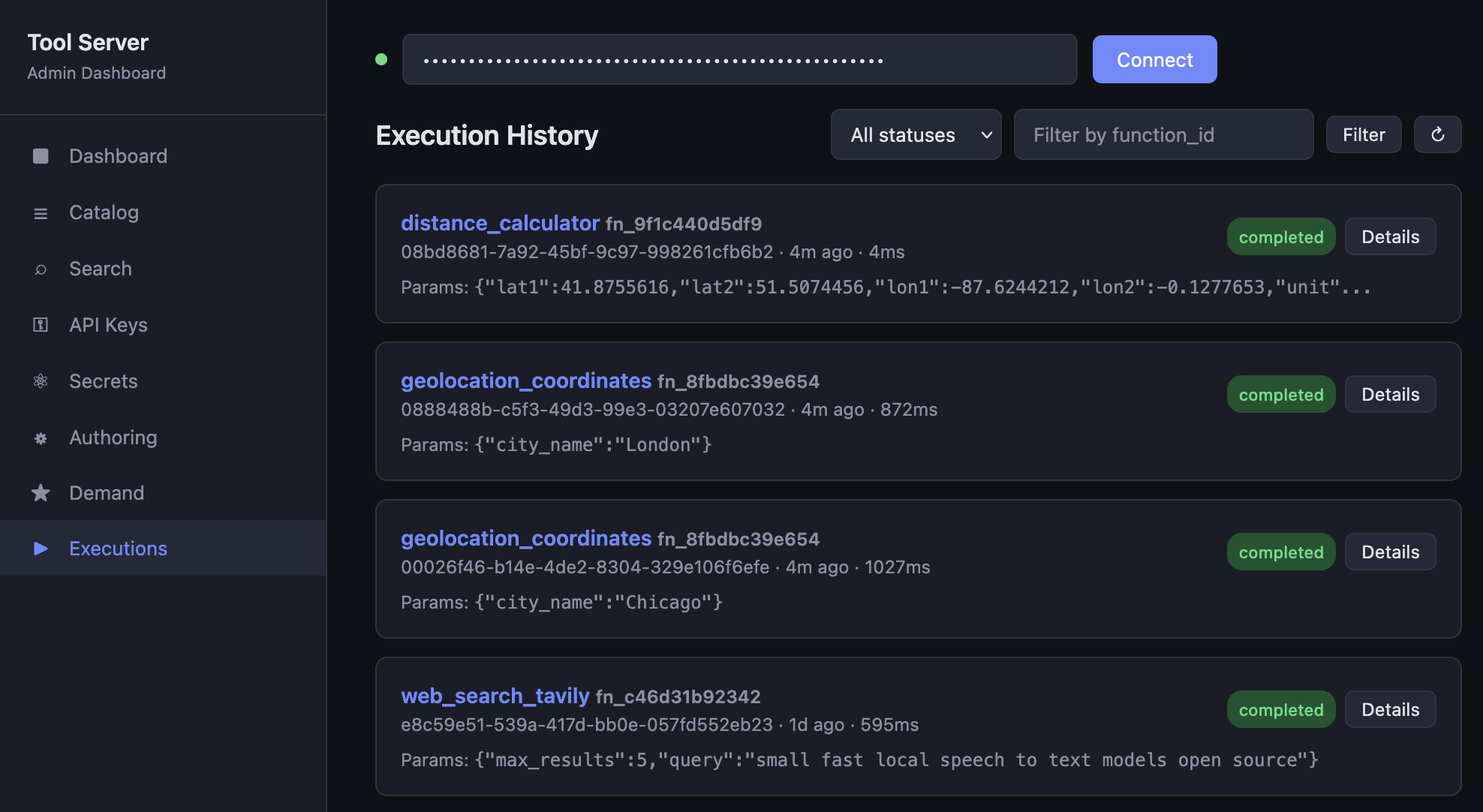

Two smaller features are worth mentioning because they change the day-to-day feel: an admin dashboard at /dashboard/ where you can browse the catalog, trigger authoring jobs, manage secrets and keys, and watch execution logs; and a demand analysis endpoint that clusters search misses — queries where nothing scored above the similarity threshold — so you can see what capabilities agents keep looking for and haven't found. Those clusters turn into one-click authoring suggestions. The catalog grows along the contours of actual use.
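
This is one plausible way to cluster the misses, not necessarily what the endpoint does: a greedy "leader" clustering over the miss queries' embeddings, with a hypothetical 0.8 similarity threshold. The largest clusters come first, since those are the capabilities agents keep asking for.

```python
import math
from typing import Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def cluster_misses(misses: list[tuple[str, Sequence[float]]],
                   threshold: float = 0.8) -> list[list[str]]:
    """Each miss joins the first cluster whose leader it resembles closely
    enough; otherwise it becomes the leader of a new cluster."""
    leaders: list[Sequence[float]] = []
    clusters: list[list[str]] = []
    for query, vec in misses:
        for i, leader in enumerate(leaders):
            if cosine(vec, leader) >= threshold:
                clusters[i].append(query)
                break
        else:
            leaders.append(vec)
            clusters.append([query])
    # Biggest clusters first: the most frequently unmet needs.
    return sorted(clusters, key=len, reverse=True)
```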

Secrets are handled the way a CI system handles them: encrypted at rest and injected as environment variables when a function executes. Right now there's some friction between the authoring pipeline and secrets provisioning: a tool can be created but stays unusable until I provide its credentials. That's a problem to solve.
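
One hypothetical way to surface that friction before it bites at execution time: compare a tool's declared secret names against the names the store holds and report what's missing (on the dashboard, say). Only names are compared, so no secret values need to be decrypted.

```python
from dataclasses import dataclass


@dataclass
class Readiness:
    ready: bool
    missing: list[str]


def check_ready(declared_secrets: list[str], stored_secret_names: set[str]) -> Readiness:
    """A tool is executable only once every secret it declared has been provided."""
    missing = sorted(set(declared_secrets) - stored_secret_names)
    return Readiness(ready=not missing, missing=missing)
```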

I also have dependencies to sort out. Tool functions can be authored with third-party modules, and those need to be declared, approved, and made available inside the (forthcoming) containerized runtime.

The gateway: one front door for every client

The gateway is the piece that makes the system feel like something you live with. The core problem it solves is that a conversation can start on any one of several client devices — a speaker in the kitchen, a display in the living room, a chat app on my phone — and the right place to continue that conversation isn't necessarily the place it started. The agent might need to push a follow-up to a different device five minutes later because I've moved, so it needs to know I've moved.

Conversations flow through here, and so do person-location updates. Those events land directly in slots on the agent's state table — replacing the current value or appending to a short list — so the agent doesn't have to reconstruct where someone is by re-reading a transcript.
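
A minimal sketch of those two slot behaviors, with illustrative slot names and a hypothetical list size: replace-style slots hold one current value, and append-style slots are bounded deques, so old entries fall off instead of accumulating.

```python
from collections import deque
from typing import Any


class StateTable:
    """Named slots that events overwrite or append to, instead of adding
    messages to a transcript."""

    def __init__(self, list_slots: dict[str, int]):
        self.values: dict[str, Any] = {}
        # Append-style slots keep only their most recent N entries.
        self.lists = {name: deque(maxlen=size) for name, size in list_slots.items()}

    def apply(self, slot: str, value: Any) -> None:
        if slot in self.lists:
            self.lists[slot].append(value)   # append; the oldest drops off when full
        else:
            self.values[slot] = value        # replace the current value

    def snapshot(self) -> dict[str, Any]:
        return {**self.values, **{k: list(v) for k, v in self.lists.items()}}
```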

Mechanically, the gateway runs two services in its own Docker Compose project: Mosquitto, an MQTT broker on TCP port 1883 and WebSocket port 9001; and a small asset server on port 8100 for shuttling assets. So far, MQTT feels like a good fit, for a few reasons. It's pub/sub, so clients don't need to know where the agent is or whether it's reachable; they just publish to a topic and subscribe to the topics they care about. It's cheap on small devices (a Raspberry Pi voice speaker or an ESP32 in a doorbell both do MQTT happily). And enough open-source projects understand it that when I want to add something new (a Home Assistant bridge, a Matter gateway, a car that reports location), there's usually an existing way to get MQTT out of it.
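
To illustrate how subscriptions select messages, here's MQTT's topic-filter matching (Mosquitto does this itself, so no client needs to). The `home/<shape>/<client>` topic layout is hypothetical: one branch per message shape, so the agent could subscribe to `home/#` while a kitchen speaker listens on something like `home/interaction/kitchen`.

```python
def topic_matches(topic_filter: str, topic: str) -> bool:
    """MQTT filter matching: '+' matches exactly one level; '#' (valid only
    as the last level) matches all remaining levels, including none."""
    f_levels = topic_filter.split("/")
    t_levels = topic.split("/")
    # Filters starting with a wildcard never match system topics like $SYS/...
    if topic.startswith("$") and f_levels[0] in ("+", "#"):
        return False
    for i, f in enumerate(f_levels):
        if f == "#":
            return i == len(f_levels) - 1
        if i >= len(t_levels):
            return False
        if f != "+" and f != t_levels[i]:
            return False
    return len(f_levels) == len(t_levels)
```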

The agent: reasoning with a state table, not just a conversation

This is where the design maybe gets a little unusual. Most of the agents I use — Cowork, ChatGPT, chat-shaped tools generally — maintain state by growing a conversation history and re-reading resources. Every relevant fact ends up as a message appended to a transcript. That works well for a session I open and close. It's less natural for a persistent home agent that has to track "user is in the kitchen, it's 2:14 AM, the laundry timer has 12 minutes left, and the authoring job from three minutes ago just completed" without burning tokens on stale messages piling up across days.

The agent builds its context differently. It still keeps recent conversation history, but it also maintains a state table: a small, structured block of current facts with named slots (time, presence, active device, tasks in flight, last interaction) that it rebuilds in place on every LLM round. Silent events, such as presence updates, write to slots directly. This keeps the LLM current on every turn without bloating the context.
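
A sketch of the rebuild-in-place step, assuming a chat-style message list: the state block is rendered fresh from the current slots each round and placed in the system message, while conversation history follows unchanged. Slot names and formatting are illustrative.

```python
from datetime import datetime


def render_state(slots: dict[str, object], now: datetime) -> str:
    """Render the state block from scratch; time comes from the clock,
    everything else from whatever the latest events wrote to the slots."""
    lines = ["## Current state", f"- time: {now:%A %H:%M}"]
    for name in sorted(slots):
        value = slots[name]
        if isinstance(value, (list, tuple)):
            value = ", ".join(map(str, value)) or "(none)"
        lines.append(f"- {name}: {value}")
    return "\n".join(lines)


def build_messages(system_prompt: str, state_block: str, history: list[dict]) -> list[dict]:
    # The state block lives in the system message and is replaced every
    # round, so stale facts never pile up in the transcript.
    return [{"role": "system", "content": f"{system_prompt}\n\n{state_block}"}, *history]
```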


What this is meant to unlock is ambient grounding. When I walk into the kitchen and ask "what's on the TV?", the agent already knows from the state table that I'm in the kitchen/living area, and can target the nearest TV without asking "which one?" The answer's already in the prompt. Slots can be added without touching the architecture: weather conditions, calendar entries, whether the porch light is on. Each is just another event type that writes to a slot. I haven't built many of these yet, but the user-location slot is in place and works nicely.

The working loop is straightforward. On a trigger event the agent runs an LLM round with the state table in context. The model searches the tool service for relevant capabilities when needed, picks a candidate, executes it, and decides what to do with the result. If it chains multiple tools, it does so in a single round by looping over tool calls until it has what it needs. If nothing in the catalog fits, it calls request_tool with a natural-language description and polls check_authoring_status politely (with wait_seconds(15) between polls so it isn't hammering the service). When the job completes, it searches again, finds the new tool, and uses it. Then it responds.
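
The find-or-author part of that loop, sketched with the control flow hard-coded. In the real agent the LLM makes these calls as tool calls; here `ToolService` is a hypothetical client interface whose method names mirror the endpoints above, and the injected `sleep` stands in for wait_seconds(15).

```python
import time
from typing import Callable, Optional, Protocol


class ToolService(Protocol):
    # Method names mirror the endpoints above; signatures are assumptions.
    def search(self, query: str) -> list[str]: ...
    def request_tool(self, description: str) -> str: ...         # returns a job ID
    def check_authoring_status(self, job_id: str) -> str: ...    # "running" | "completed" | "failed"


def find_or_author(svc: ToolService, need: str, description: str,
                   poll_seconds: float = 15, max_polls: int = 40,
                   sleep: Callable[[float], None] = time.sleep) -> Optional[str]:
    """Return the name of a tool covering `need`, authoring one if necessary."""
    hits = svc.search(need)
    if hits:
        return hits[0]
    job_id = svc.request_tool(description)   # ask for a general-purpose tool
    for _ in range(max_polls):
        sleep(poll_seconds)                   # wait between polls instead of hammering
        status = svc.check_authoring_status(job_id)
        if status == "completed":
            hits = svc.search(need)           # published tools are searchable at once
            return hits[0] if hits else None
        if status == "failed":
            return None
    return None
```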

The agent runs in a container, and by design the only things it can reach are the event bus and the tool service. No shell, no host filesystem, no direct network access to external APIs. Everything it wants to do in the world it has to do through a tool, and every tool has its blast radius declared up front. That's certainly a trade-off, but it contains the agent's behavior and puts every capability in one place where you can make firm decisions about it.

Picking the right model

One practical thing I learned early: this only works with a capable enough model. I spent real time testing small models, and they all had issues. Again, I was working with a MacBook Air. So the models for the brain, for authoring, and for embeddings are each configured independently, and each can be local or remote. For the brain, I'm currently running Qwen 3.6 MoE with MLX on a Mac Mini M4 Pro with 64 GB. That's the kind of thing the laptop couldn't do without going to Anthropic or OpenAI.
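
A sketch of per-role model configuration, assuming environment-variable overrides. The role names match the ones above, but the variable names, the brain's local URL, and the model IDs for the brain and authoring roles are placeholders; only nomic-embed-text and Ollama's default port 11434 are real values.

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional


@dataclass(frozen=True)
class ModelConfig:
    provider: str                    # e.g. "mlx", "ollama", "anthropic"
    model: str
    base_url: Optional[str] = None   # set for local servers; None for hosted APIs


# Placeholder defaults, one per role.
DEFAULTS = {
    "brain": ModelConfig("mlx", "qwen-3.6-moe", "http://mac-mini.local:8080"),
    "authoring": ModelConfig("anthropic", "claude-sonnet"),
    "embedding": ModelConfig("ollama", "nomic-embed-text", "http://localhost:11434"),
}


def load_models(env: Mapping[str, str] = os.environ) -> dict[str, ModelConfig]:
    """Resolve each role independently. A hypothetical BRAIN_MODEL=provider:model
    swaps one role without touching the others."""
    resolved = {}
    for role, default in DEFAULTS.items():
        override = env.get(f"{role.upper()}_MODEL")
        if override:
            # Split on the first colon only, so tags like "qwen3:8b" survive.
            provider, _, model = override.partition(":")
            resolved[role] = ModelConfig(provider, model, env.get(f"{role.upper()}_BASE_URL"))
        else:
            resolved[role] = default
    return resolved
```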

The agent process itself is still a test harness today (agent/chat.py) that runs either interactively on stdin or as an MQTT subscriber. The full marshalling agent (persistent, memory-aware, handling arbitrary trigger events) needs real work before it's production-ready.

What the three layers unlock together

The individual pieces are each reasonable on their own. The design choices that matter are at the seams.


Because the tool service is dumb and MCP-exposed, any MCP-compatible agent can use it — which means the reasoning layer is swappable. The test harness talks to a local Qwen 3.6 MoE on the Mac Mini today, and a Claude or GPT-4 agent could plug in just as easily via MCP. The tool catalog travels with me regardless of who's doing the reasoning.

Because the agent has no direct access to devices, APIs, or the filesystem — only to tools, which are themselves sandboxed and scoped — the trust boundary is the container wall, not a per-action permission prompt. I don't have to choose between "fully autonomous" and "doesn't touch anything dangerous," because the system never lets the agent touch anything dangerous in the first place.

Because presence is ambient context rather than conversational input, the agent can make routing decisions that account for the physical world. Kitchen-to-living-room handoffs. "How's that thing going?" meaning the task from 20 minutes ago without me naming it. Long-running authoring jobs that complete quietly and get reported via a process event rather than requiring me to ask.

And because the authoring pipeline exists, capability gaps close automatically for general-purpose needs. The prompt engineering around it pushes the agent to generalize — "a tool that converts between common units of measurement," not "a tool that converts 185 pounds to kilograms" — so the catalog grows along reusable lines rather than collecting single-shot bespoke functions. The demand analysis endpoint catches the patterns I don't notice.

What's not done, and what I'd reconsider

A few things are still missing or sketchy.

Tool function execution isn't properly sandboxed yet. The current runner is fast for development and keeps the authoring/review/registration loop tight, but it's explicitly a prototype substrate. The container-based sandbox with network policy enforcement is next, and the code is already set up to accept it.
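
What the container launch could look like, as a sketch that only builds the `docker run` argument list and doesn't run anything. The flags are standard Docker options; the resource limits, image name, and `tool-egress` network are hypothetical. Docker alone can't allowlist domains, so this assumes a network whose only way out is a filtering proxy that enforces network_permissions (the proxy itself isn't shown).

```python
def docker_run_args(image: str, secret_names: list[str], allowed_domains: list[str],
                    egress_network: str = "tool-egress") -> list[str]:
    """Build (but don't run) a docker run command for one tool execution."""
    args = [
        "docker", "run", "--rm", "-i",      # clean up afterwards; keep stdin open for params
        "--read-only",                       # container filesystem is read-only
        "--cap-drop", "ALL",                 # drop every Linux capability
        "--security-opt", "no-new-privileges",
        "--memory", "256m", "--cpus", "0.5", "--pids-limit", "64",
    ]
    if allowed_domains:
        # Hypothetical network routed through a proxy that enforces the
        # tool's declared domains.
        args += ["--network", egress_network]
    else:
        args += ["--network", "none"]        # no egress at all
    for name in sorted(secret_names):
        # "--env NAME" without "=value" copies NAME from the docker CLI's own
        # environment, so secret values never appear on the command line.
        args += ["--env", name]
    args.append(image)
    return args
```

The caller would launch this with `subprocess.run`, adding the decrypted secret values to the environment the Docker CLI starts with.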

Memory and context switching are an open problem. A home agent has a continuous life, not a series of isolated Q&A pairs. The plan is a layered memory store — a small task table in SQLite for working memory, recent conversation history per task with embeddings in a separate Qdrant collection, and a long-term semantic store for completed work. But the details of the routing logic ("is this new message about an existing task or a new one?") aren't settled.
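
As one possible starting point for the routing question, not a settled design: embed the incoming message, compare it with one embedding per open task, and attach it to the closest task above a hypothetical threshold; otherwise, start a new task.

```python
import math
from typing import Optional, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def route_message(message_vec: Sequence[float],
                  open_tasks: dict[str, Sequence[float]],
                  threshold: float = 0.75) -> Optional[str]:
    """Return the ID of the open task this message most likely continues,
    or None to signal that a new task should be created."""
    best_id: Optional[str] = None
    best_score = threshold
    for task_id, task_vec in open_tasks.items():
        score = cosine(message_vec, task_vec)
        if score >= best_score:
            best_id, best_score = task_id, score
    return best_id
```

Pure similarity won't resolve a vague reference like "how's that thing going?", which carries little semantic overlap with the task it points at; a fallback such as the most recently active task would probably be needed.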

Stateful tool functions and assets are wonky. There is an asset delivery mechanism, but it isn't elegant. I need something better for this.

None of these are architectural redesigns. They're all fillable slots.

Closing

The short version of all of this: a home agent is a different shape of problem from a chat-window session, at least for the way I want to use one. The architecture has to reflect that. A place for capabilities to live that isn't coupled to the reasoning loop. A message fabric that treats presence, time, and process events as first-class and lets any client — whether it's a speaker bolted to the kitchen wall or the phone in my pocket — participate with a tiny contract. A reasoning layer that consumes ambient context without growing a transcript. A trust model that makes full autonomy safe by default, rather than bolting safety on after the fact. And if I want the thing to keep being useful over years rather than hours, it has to be able to grow its own hands.

The first time I tried this I just didn't have the tools. This time I think I do. I'll post again when everything's running in its permanent home and I can wander around the house talking to it.